ChatGPT vs Gemini vs Claude: Which AI is Best for Writers in 2026?

Three AI assistants walk into a bar. A writer asks all three for help with an article. One is verbose and confident. One is thorough but slow. One is sharp and occasionally opinionated.

The question is: which one should you use for your writing?

In 2026, ChatGPT, Gemini, and Claude are the three dominant general-purpose AI tools for writers. Each has a genuinely different character, different strengths, and different failure modes. This comparison is based on hands-on testing across six common writing tasks: not benchmarks, but the actual output quality on work that writers do every day.

The Short Answer (For Those Who Won’t Read the Full Article)

- Best for creative writing: Claude

- Best for research-heavy writing: Gemini

- Best for fast content at scale: ChatGPT

- Best for beginners: ChatGPT

- Best for long-form depth: Claude

Now let’s explain why.

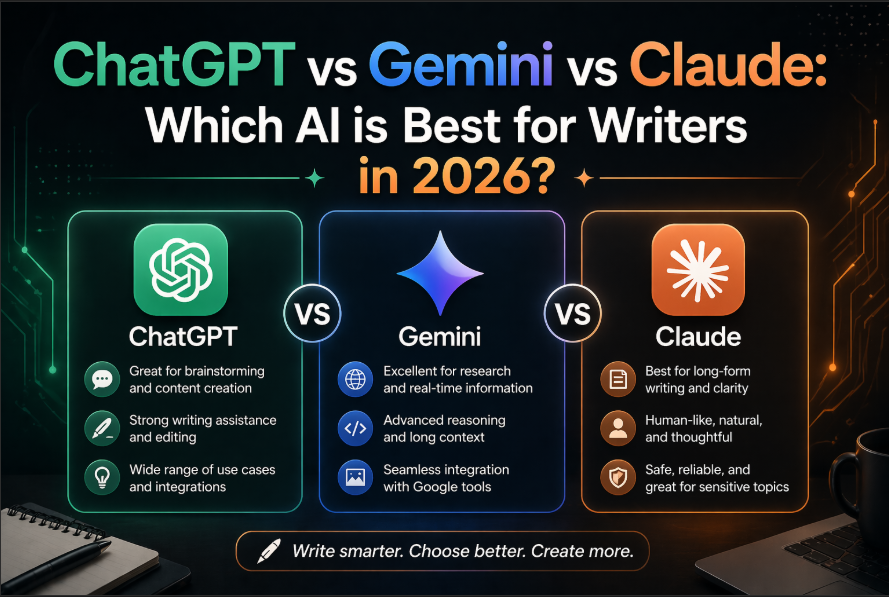

Quick Overview of Each Tool

ChatGPT (OpenAI)

The original mass-market AI assistant. GPT-4o is available on the free tier in 2026, making it the most accessible option. ChatGPT is confident, fast, and great at producing structured content quickly. It still occasionally hallucinates facts, though it has improved significantly. The ecosystem is massive: custom GPTs and third-party integrations are everywhere.

Gemini (Google)

Google’s AI assistant, with deep integration into Google’s products (Docs, Sheets, Gmail, Drive). Gemini’s biggest edge is real-time web access, which lets it pull current information into a draft. For writers who need research integrated into their workflow, or who live in Google Workspace, Gemini is a powerful choice.

Claude (Anthropic)

Claude is the tool that many professional writers have quietly switched to as their primary assistant. It’s known for a long context window (it can handle very long documents), more nuanced writing that sounds less “AI-generated,” strong instruction-following, and a tendency toward careful reasoning over confident-but-wrong answers.

Head-to-Head: 6 Common Writing Tasks

Task 1: Writing a Blog Post Introduction

The prompt: “Write an engaging introduction for a blog post about why most small businesses fail in their first year. Avoid clichés. Hook the reader immediately.”

ChatGPT: Produced a solid intro with a clear structure. Slightly formulaic — the kind of intro that’s competent but recognizable as AI-written. Good for content at volume.

Gemini: Wrote a data-heavy intro that cited statistics. Well-structured and informative, but the opening lacked punch. Better suited for B2B or educational content than consumer-facing blogs.

Claude: Produced the most distinctive intro — opened with a specific scenario rather than a statistic, used more precise language, and felt less like a template. Required the least editing.

Winner: Claude for quality; ChatGPT for speed/volume.

Task 2: Research Integration

The prompt: “What are the most common reasons small businesses fail? Include recent data.”

ChatGPT: Gave a solid answer but acknowledged its knowledge cutoff limitation. No real-time data.

Gemini: Pulled in recent statistics from 2025 sources, cited them, and integrated them naturally into a well-structured response. Clear advantage when current data matters.

Claude: Strong analytical response, well-reasoned, but also limited by training data cutoff for very recent statistics.

Winner: Gemini — and it’s not close for research-heavy writing.

Task 3: Editing and Rewriting

The prompt: “Rewrite this paragraph to make it more concise and punchy: [provided a 150-word dense paragraph]”

ChatGPT: Reduced it to 80 words. Clear improvement, but some nuance was lost in the compression.

Gemini: Produced a technically clean rewrite but kept more words than necessary. Good at preserving meaning; not as good at punchy compression.

Claude: Reduced to 75 words while keeping all the key ideas. The word choices were more precise — “significant” became “major,” “in order to” became “to.” The result felt genuinely edited, not just shortened.

Winner: Claude — consistently produces the most editorial-quality edits.

Task 4: Long-Form Content (1,500+ words)

The prompt: “Write a 1,500-word guide to setting up a freelance writing business from scratch.”

ChatGPT: Produced 1,500 words quickly. Well-structured, covered the key points, occasionally generic in advice. Good enough for most purposes.

Gemini: Produced a solid guide with good integration of practical steps. The Google product mentions felt slightly promotional but were generally relevant.

Claude: Produced the most coherent 1,500-word piece — better narrative flow, more specific actionable advice, less reliance on generic bullet points. The writing felt more like a human had thought it through rather than assembled it.

Winner: Claude for quality; ChatGPT for speed.

Task 5: Writing in a Specific Voice or Style

The prompt: “Write a 200-word product description for a premium notebook in the brand voice of Moleskine: understated, slightly poetic, confident.”

ChatGPT: Competent execution. Hit the tone reasonably well but used some slightly generic “premium” language.

Gemini: Struggled slightly with the brand voice specificity. More informative than evocative.

Claude: Best execution. The language was more precise and the tone was more consistently understated. Less reliance on obvious “premium” vocabulary clichés.

Winner: Claude — it handles voice and tone instructions with more nuance.

Task 6: Brainstorming and Ideation

The prompt: “Give me 20 unique angle ideas for an article about remote work in 2026 that haven’t been done to death.”

ChatGPT: Generated 20 ideas quickly. About 8-10 were genuinely fresh; the rest were familiar takes repackaged.

Gemini: Generated 20 ideas with more research grounding — some tied to recent news and data. Good for finding timely hooks.

Claude: Generated 20 ideas with the most unexpected angles — more willing to suggest contrarian or counterintuitive takes. The best ideas on the list came from Claude’s output.

Winner: Tie between ChatGPT (volume) and Claude (originality).

Pricing Comparison (2026)

| Tool | Free Plan | Paid Plan |
|---|---|---|
| ChatGPT | GPT-4o available free (limited) | $20/month (Plus) |
| Gemini | Available free | $20/month (Advanced) |
| Claude | Available free (limited) | $20/month (Pro) |

All three cost $20/month at the paid tier, so the decision comes down to which tool serves your workflow better, not price.

Which One Should You Use?

Use ChatGPT if:

- You’re new to AI and want the most beginner-friendly experience

- You need to produce content quickly at volume

- You use custom GPTs or third-party integrations

- You want the largest ecosystem of connected tools

Use Gemini if:

- You live in Google Workspace (Docs, Gmail, Sheets)

- Your writing is research-heavy and needs current data

- You want AI assistance built directly into your existing tools

- You’re writing content that requires up-to-date information

Use Claude if:

- You care about writing quality over writing speed

- You work with long documents (reports, books, long articles)

- You want output that requires less editing to sound human

- You’re doing creative writing, editing, or tone-sensitive work

- You want an AI that reasons carefully rather than answers fast

The Honest Take

Many writers who’ve tried all three end up using Claude as their primary writing tool and Gemini as a research companion. ChatGPT remains excellent for quick tasks and for when you need the connected app ecosystem.

The good news: all three have free plans that are genuinely useful. You don’t have to commit to any of them. Spend a week testing each on your actual work tasks, and let the output quality guide your decision.

Thinking about leveling up your AI prompting skills? Read: [AI Prompt Tricks That Get 10x Better Results from ChatGPT] — most apply to Claude and Gemini too.
